Disclosure Is Not Governance

If AI governance is real, it is inspectable. Boards need an auditable oversight cadence, CEO accountability, and disclosure review discipline, not a paragraph in the risk section.

Seminal Perspectives for Thursday, May 14, 2026. Many boards now approve filings that name AI risk. Far fewer boards can prove they govern AI through repeatable rituals.

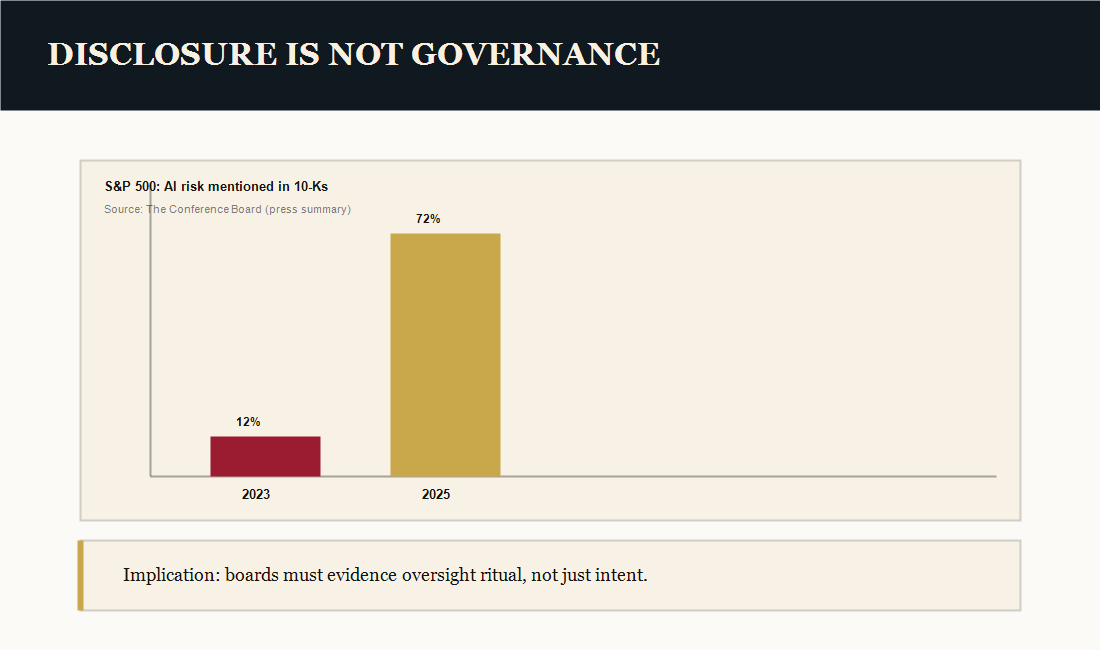

The fiduciary gap: disclosure outran governance ritual

Disclosure answers one question: do we recognize this risk? Governance answers a harder question: can we prove we oversee it?

The risk is not that a board discloses AI risk. The risk is that the disclosure becomes a public standard the board cannot support with repeatable evidence: a cadence, a reporting format, documented accountability, and a protocol for reviewing AI language before it becomes a commitment.

In practice, the board-facing question is not “are we using AI?” It is:

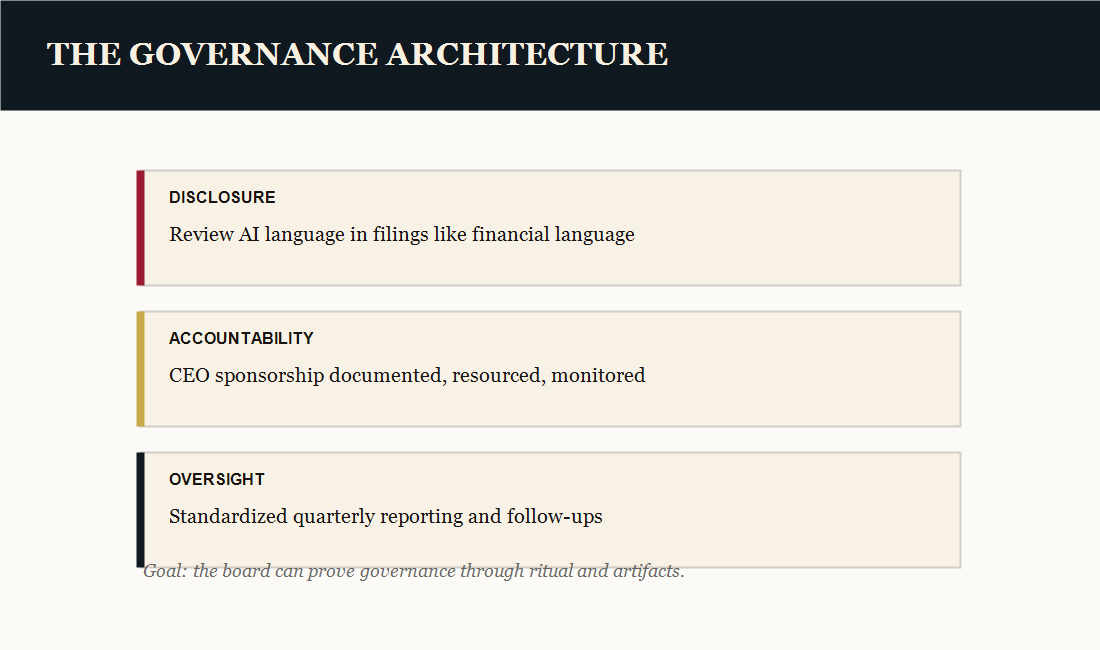

AI governance has three layers

Boards do not need to become the technical owners of AI. They do need a governance architecture that produces evidence. In the AI-First Culture work, that architecture has three layers.

1) Oversight: standardized reporting, in board language, on what is deployed, what is changing, and how risk posture and maturity are progressing.

2) Accountability: CEO sponsorship treated as a governed commitment with owners, resources, and an 18-month transformation window the board can monitor.

3) Disclosure: a review protocol so AI statements in public filings are vetted with the same seriousness as financial disclosures.

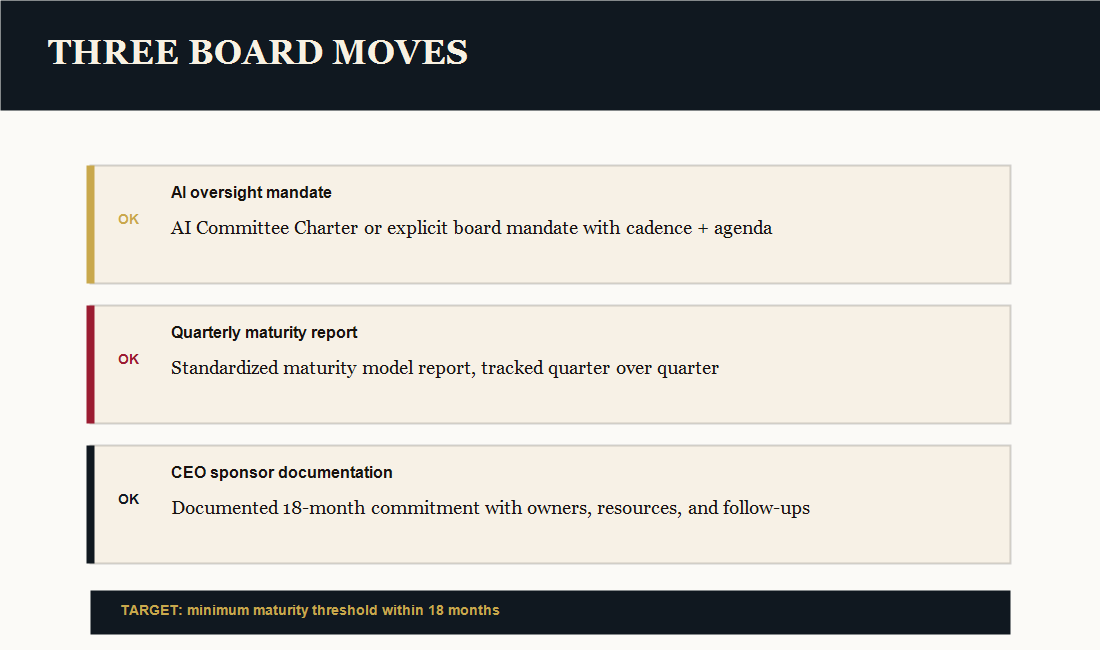

Three board moves that convert intention into ritual

Most boards do not need a new philosophy. They need a ritual redesign that makes oversight auditable.

- Establish an AI oversight mandate: an AI Committee Charter or explicit board mandate that defines what is reviewed, when, and in what format.

- Mandate a quarterly maturity report: a standardized maturity model report that the board receives every quarter, in place of ad hoc updates.

- Require CEO sponsor documentation: a governed artifact that captures the CEO’s transformation commitment, resourcing, and operating cadence.

The practical target is simple: reach a minimum maturity threshold within 18 months and be able to show evidence of progress without scrambling for slides the week before a filing.

Sources (primary / authoritative)

Touch Stone Publishers source base

- Touch Stone Publishers, *AI-First Culture: The Fiduciary Governance Imperative* (Board of Directors white paper, May 2026). Internal project source: `whitepaper_board_ai-first-culture.docx` and the supporting packet in `Touch SOP\\ContentOps\\notebooklm_board_whitepaper_video_packet_20260513.md`.

Public primary / authoritative sources cited for external claims

- SEC Investor Advisory Committee draft recommendation PDF (current draft as of Nov 18, 2025): https://www.sec.gov/files/sec-iac-artificial-intelligence-recommendation-111825.pdf

- SEC.gov remarks page for the Investor Advisory Committee meeting (Dec 4, 2025): https://www.sec.gov/newsroom/speeches-statements/uyeda-remarks-iac-120425

- The Conference Board (reported summary of S&P 500 AI risk disclosure rates; see also press coverage): https://www.cfobrew.com/stories/2025/10/08/companies-disclosed-ai-risks-on-10-ks

If your organization is deploying AI, you need a governance cadence your board can audit. The diagnostic takes under five minutes and identifies the most urgent intervention point in the CAIRO Framework.