Pillar I: Fiduciary Governance Architecture · February 2026

When the Chain Breaks:

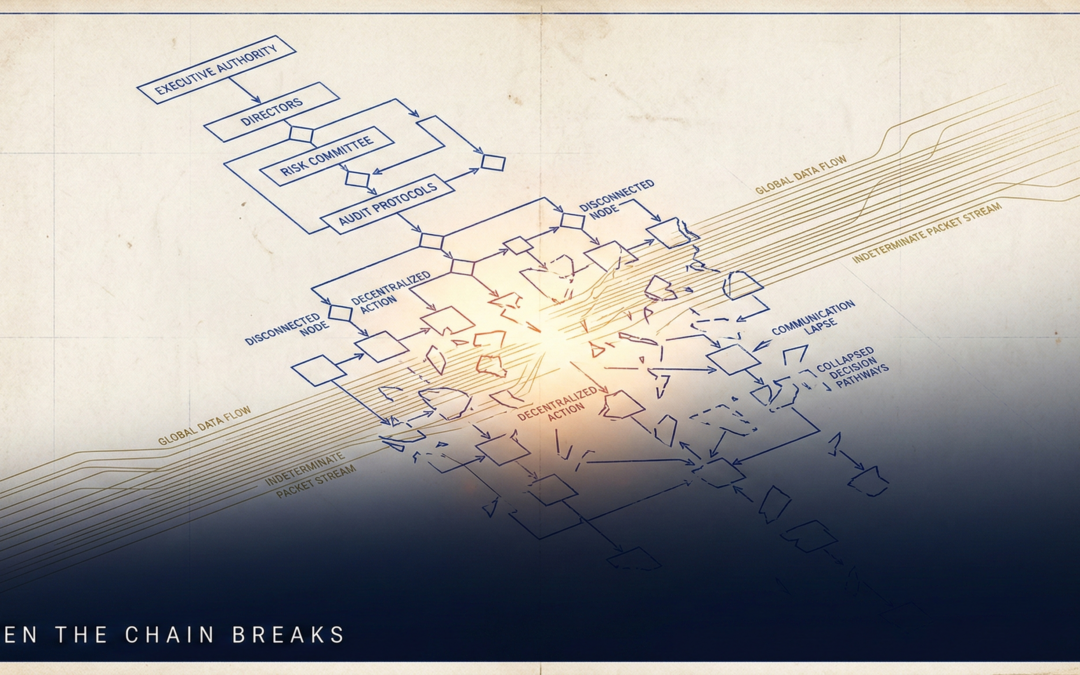

The Structural Collapse

Inside AI Governance Failure

The governance gap between autonomous AI execution and board-level accountability does not announce itself. It accumulates — silently, structurally, and with compounding legal consequence. Understanding why requires dismantling the assumption that hierarchical authority can govern machine-speed decisions.

64% of boards with no formal AI governance framework

6% have constituted a dedicated AI oversight committee

12–18 months average lag from AI governance failure to board visibility

3.4× performance multiplier with structured AI governance

projected enterprise AI spend vs. 2000:1 oversight deficit

The Authority Gap No Quarterly Report Can Surface

There is a structural failure mode that organizations rarely identify until after it has already produced liability. It does not appear in the quarterly dashboard. It does not trigger an audit flag. It accumulates silently — in the distance between the decisions boards believe they are making and the decisions autonomous systems are actually executing on their behalf. By the time the gap becomes visible, the accountability chain has already broken.

The forensic evidence is unambiguous. A 64% majority of boards currently operate without a formal AI governance framework [1]. Only 6% have constituted a dedicated AI oversight committee. This is not a technology literacy problem. It is an authority architecture problem, and its legal and capital consequences are now converging in ways that narrow the governance response window to the current fiscal period.

Understanding why requires dismantling one persistent institutional assumption: that governance instruments designed for human decision chains can govern systems that execute at machine speed across thousands of simultaneous decision nodes. They cannot. When that assumption remains unchallenged, organizations do not govern their AI deployments — they ratify outcomes they had no structural capacity to prevent.

Governance instruments built for human decision chains were never engineered for systems that execute at machine speed across thousands of simultaneous decision nodes.

Touch Stone Decision Architecture Framework™

Three Failure Modes with Consistent Forensic Regularity

Gartner's benchmark of 360 organizations found that those with structured AI governance platforms are 3.4 times more likely to achieve high operational effectiveness [2]. The inverse finding is the more instructive data point: organizations without governance architecture are not simply less effective; they are operating with authority structures that cannot account for their own outputs.

The first failure mode is Delegation Without Definition. Boards approve AI deployments at the strategy layer and assume that operational implementation carries corresponding governance authority. It does not. Delegating execution to an autonomous system without defining the boundaries of that delegation — the decision scope, the escalation triggers, the outcome accountability chain — creates a governance vacuum that no policy document can retroactively fill.

The second is Oversight Architecture Mismatch. Legacy governance instruments operate on cycles calibrated to human decision velocity: quarterly reviews, monthly dashboards, annual audits. AI systems operate on execution cycles measured in milliseconds. A quarterly audit of a system making 40,000 credit decisions per hour does not constitute oversight. It constitutes historical documentation of outcomes the board had no structural capacity to influence.
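The scale of that mismatch can be made concrete with a rough calculation. The sketch below takes the 40,000 decisions per hour figure from the text and assumes, purely for illustration, that the system runs around the clock, that a quarter is 91 days, and that a month is 30 days.

```python
# Back-of-the-envelope estimate of how many decisions execute between reviews.
# Only DECISIONS_PER_HOUR comes from the text; the other constants are assumptions.
DECISIONS_PER_HOUR = 40_000
HOURS_PER_DAY = 24       # assumption: continuous operation
DAYS_PER_QUARTER = 91    # assumption: approximate quarter length
DAYS_PER_MONTH = 30      # assumption: approximate month length


def decisions_between_reviews(days_between_reviews: int) -> int:
    """Return the number of decisions executed during one review interval."""
    return DECISIONS_PER_HOUR * HOURS_PER_DAY * days_between_reviews


quarterly = decisions_between_reviews(DAYS_PER_QUARTER)
monthly = decisions_between_reviews(DAYS_PER_MONTH)

print(f"Decisions per quarterly audit cycle: {quarterly:,}")    # 87,360,000
print(f"Decisions per monthly dashboard cycle: {monthly:,}")    # 28,800,000
```

Under these assumptions, a single quarterly audit looks back on roughly 87 million decisions that have already taken effect.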

The third, and most legally consequential, is Red Flag Blindness. Delaware's Caremark doctrine, extended to AI contexts through the SolarWinds cybersecurity precedent, imposes personal director liability not only for failing to implement governance systems, but for failing to act on documented risk signals those systems surface [3]. A board that receives AI risk signals and cannot demonstrate a structured response protocol has satisfied neither prong of Caremark compliance.

When AI systems operate faster than governance review cycles, the board is not governing — it is ratifying outcomes it had no structural capacity to prevent.

The Legal Architecture of the Governance Gap

The Caremark doctrine operates through two independent prongs — either is sufficient to establish board liability. Prong One addresses structural failure: a board that has implemented no information system capable of bringing critical AI risks to its attention has failed its oversight obligation before a single incident occurs. The absence of governance architecture is itself the violation.

Prong Two addresses the more insidious failure: a board that has implemented a governance system but consciously failed to monitor it. The Deloitte AI Risk Governance Survey (2025) found that the average lag between an AI governance failure and board-level visibility is 12–18 months [4]. A governance system with an 18-month signal lag is not a governance system. It is a post-incident notification mechanism dressed in the language of oversight.

The Marchand v. Barnhill (2019) Delaware Supreme Court standard adds a third governance dimension specific to organizations where AI is mission-critical [5]. Where autonomous systems are central to the business model (embedded in financial services, healthcare diagnostics, employment decisions), the board's oversight obligation is subject to heightened scrutiny. This is an active legal standard that Delaware courts have already signaled they will apply to AI governance failures.

The average lag between an AI governance failure and board-level visibility is 12–18 months. A governance system with an 18-month signal lag is not oversight — it is post-incident notification.

Threshold Governance vs. Approval Governance

The Touch Stone Decision Architecture Framework™ identifies Decision-Rights Architecture as the foundational instrument for closing the governance gap. Decision rights are not approval protocols — they are structural definitions. They specify which decisions the organization reserves for human judgment, which it delegates to autonomous execution, and which require human-AI collaborative validation before output becomes actionable.
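One way to picture decision rights as structural definitions rather than approval steps is a simple registry that assigns each decision type to one of the three categories described above. This is a minimal sketch, not the framework's own implementation: the decision types, their assignments, and the rule that undefined decisions default to human judgment are all hypothetical choices made for illustration.

```python
from enum import Enum


class DecisionRight(Enum):
    HUMAN_RESERVED = "reserved for human judgment"
    AUTONOMOUS = "delegated to autonomous execution"
    COLLABORATIVE = "requires human-AI validation before output is actionable"


# Hypothetical registry: decision types and assignments are illustrative only.
DECISION_RIGHTS = {
    "credit_limit_increase": DecisionRight.AUTONOMOUS,
    "loan_denial": DecisionRight.COLLABORATIVE,
    "account_termination": DecisionRight.HUMAN_RESERVED,
}


def right_for(decision_type: str) -> DecisionRight:
    """Look up a decision right; undefined types fall back to human judgment (assumed rule)."""
    return DECISION_RIGHTS.get(decision_type, DecisionRight.HUMAN_RESERVED)


print(right_for("loan_denial").name)
print(right_for("unlisted_decision").name)
```

Defaulting undefined decision types to human judgment is one possible design choice; it means a decision nobody has scoped is never executed autonomously by omission.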

The architecture operates through threshold-based governance rather than approval-based governance. Approval-based governance requires human authorization before action — structurally incompatible with systems executing in real time. Threshold-based governance defines the parameters within which autonomous action is permitted, the signals that trigger mandatory escalation, and the accountability chain that owns outcomes when thresholds are crossed. The board does not approve each decision — it approves the decision parameters. That is the structural distinction between meaningful governance and the pretense of it.
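The difference between approving decisions and approving decision parameters can be sketched in a few lines. The example below is an illustration under stated assumptions: the `ThresholdPolicy` fields, the dollar and confidence limits, and the owner role are hypothetical and do not come from the framework itself.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class ThresholdPolicy:
    """Board-approved parameters for one class of autonomous decision (hypothetical)."""
    max_amount: float        # largest amount the system may act on alone
    min_confidence: float    # lowest model confidence permitted for autonomous action
    accountable_owner: str   # role that owns the outcome when a threshold is crossed


@dataclass(frozen=True)
class Outcome:
    autonomous: bool
    escalate_to: Optional[str]
    reasons: Tuple[str, ...]


def evaluate(policy: ThresholdPolicy, amount: float, confidence: float) -> Outcome:
    """Permit autonomous action inside the parameters; otherwise trigger escalation."""
    reasons = []
    if amount > policy.max_amount:
        reasons.append("amount exceeds approved limit")
    if confidence < policy.min_confidence:
        reasons.append("confidence below approved floor")
    if reasons:
        return Outcome(False, policy.accountable_owner, tuple(reasons))
    return Outcome(True, None, ())


# Illustrative values only.
policy = ThresholdPolicy(max_amount=50_000.0, min_confidence=0.90,
                         accountable_owner="Chief Risk Officer")
within = evaluate(policy, amount=12_000.0, confidence=0.97)
outside = evaluate(policy, amount=75_000.0, confidence=0.85)
print(within)
print(outside)
```

In this sketch the board's approval is captured once, in the policy object, and every individual decision is checked against it at execution time instead of waiting for a human sign-off.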

JPMorgan Chase's implementation of structured AI governance frameworks generated $1.5 billion in attributable value in 2024, not because governance was applied as a compliance layer, but because decision-rights clarity accelerated deployment velocity by eliminating ad hoc approval friction [6]. The competitive case for governance architecture is not separate from the legal case. It is the same case expressed in a different currency.

The governance gap closes through three sequential instruments: a formally chartered AI oversight committee, a board-approved decision-rights boundary definition, and an AI risk information pipeline independent of management reporting.

The Convergence That Narrows the Decision Window

The governance imperative has intensified in the current period because three distinct pressure systems are converging simultaneously. Agentic AI systems are exacerbating the velocity gap, as algorithmic execution now operates at a tempo that executive judgment hierarchies were never designed to match. Physical infrastructure constraints — including a 17% kinetic power cap realignment — are reshaping the capital deployment calculus for AI at the infrastructure layer.

And supply chain legal exposure is transitioning from voluntary disclosure frameworks to mandatory due diligence with extraterritorial effects. The invalidation of IEEPA tariffs by the U.S. Supreme Court in February 2026 and the imposition of replacement Section 122 surcharges have introduced structural disruption into supply chain cost assumptions precisely as global due diligence mandates (France's Loi de Vigilance, the UK Modern Slavery Act) are extending civil liability to parent companies for violations within their full supply chain. The governance response window is not closing gradually. It has narrowed to the current fiscal period.

Boards that understand this convergence will respond with structural precision: a formally chartered AI oversight committee, board-approved decision-rights boundary definitions, and AI risk information pipelines independent of management reporting. Those that treat these as aspirational commitments rather than executable structural changes will discover, as their predecessors did with cybersecurity governance, that the doctrine that creates liability does not wait for governance readiness.

When an organization deploys an autonomous AI system, it delegates execution authority but retains full governance accountability. The absence of a formal governance architecture does not reduce that accountability — it amplifies the personal liability exposure of every director who approved the deployment without one. Execution may be delegated to a machine. Accountability cannot be.

The assumption: Governance responsibility follows execution authority downward through the organizational hierarchy. Where authority is delegated, accountability travels with it.

The reality: Governance accountability is non-delegable. Only execution may be delegated to autonomous systems. The board that delegates without governing has not reduced its fiduciary exposure; it has maximized it.

1. NACD Board Practices Report, 2025.

2. Gartner AI Governance Study, 360-Organization Benchmark, 2024.

3. In re SolarWinds Corp. Securities Litigation, S.D.N.Y., 2023; Delaware Caremark doctrine (In re Caremark Int'l Inc. Derivative Litigation, Del. Ch. 1996).

4. Deloitte AI Risk Governance Survey, 2025.

5. Marchand v. Barnhill, Del. Sup. Ct., 2019.

6. JPMorgan Chase Annual Report, 2024.