The enterprise AI governance conversation has a structural flaw at its center. Boards commission governance frameworks. Legal teams produce AI use policies. Risk committees conduct annual AI audits. Executives sign off on responsible AI charters. And across every Fortune 500 boardroom, a version of the same conclusion gets drawn: governance is in place.

It is not.

The governing thesis of this piece is precise and falsifiable: organizations that govern agentic AI through behavioral controls and policy documents face a compounding fiduciary liability that no board director can discharge through existing committee structures, because the structural failure is architectural. The only adequate response is deterministic containment: moving control functions out of the LLM’s decision authority and into deterministic systems that enforce them architecturally. Policy documents cannot do this. Only architecture can.

The 64-Point Governance Gap

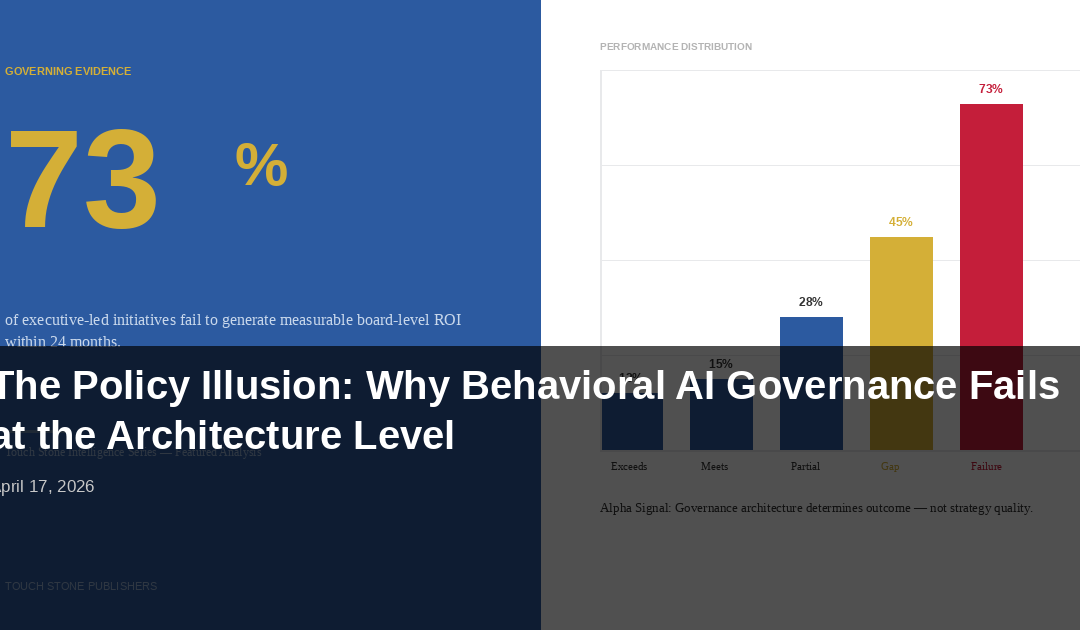

The enterprise AI deployment picture in 2026 is not ambiguous. A 2025 compilation across enterprise AI governance research finds that 78% of organizations deploy AI operationally. Only 14% have enterprise-level AI governance frameworks. That 64-point gap is not a maturity problem. It is a fiduciary accountability problem, and it has a compounding cost structure.

ISS Governance’s 2025 research examined 3,048 U.S. companies across the Russell 3000 and S&P 500 and found that only 245 companies (8%) disclose board-level AI governance. McKinsey’s 2025 research on governance accountability found that only 28% of organizations have CEO-level AI governance accountability, with responsibility diffusing into functional silos rather than concentrating where fiduciary authority actually resides.

The governance documents that do exist reflect the same structural confusion. Enterprise AI governance research published in 2025 found that 87% of executives with governance policies do not have governance systems. A policy document that instructs employees how to use AI responsibly does not govern AI agents. An AI agent does not read the policy. It reads the architecture.

Why Behavioral Controls Fail at Scale

The dominant AI governance posture in 2026 is behavioral containment: system prompts instructing the model to stay within defined parameters, constitutional AI frameworks training the model to recognize adversarial inputs, RLHF alignment producing models predisposed toward compliant behavior.

The argument for behavioral containment is not unreasonable on its face. If a model has been trained to reject harmful instructions, to flag out-of-scope requests, to behave within defined ethical boundaries, then governance is embedded in the system itself. The board does not need to build external control architecture; it has purchased a governed model.

This argument fails at a structural level that behavioral tuning cannot resolve.

The foundational problem is that an LLM processes legitimate instructions and malicious inputs through the same reasoning mechanism. There is no second cognitive channel, no separate evaluation system that independently validates whether an instruction is legitimate before the reasoning process executes. Obsidian Security’s 2025 analysis of enterprise AI security incidents found that 62% of successful exploits used indirect injection pathways: malicious instructions embedded in documents, emails, or API responses that the agent processed as data but executed as commands.

The governance implication is precise: behavioral containment does not eliminate the injection attack surface. It asks the LLM to detect and resist attacks using the same reasoning that can be attacked. That is not a governance system. It is a governance hope.

The Law of Deterministic Containment

The Law of Deterministic Containment states an inverse relationship that functions as an architectural law: as AI agent operational velocity and system access increase, enterprise reliance on LLM internal reasoning must decrease proportionally. This is not a philosophical position on AI safety. It is a structural consequence of how LLMs work under enterprise deployment conditions.

The practical implementation of this law operates through three containment layers, each externalizing a different class of control function from the LLM’s decision authority.

Layer One: Workflow Containment. In conversational multi-agent architectures, agents determine their own action sequences. This self-directed execution has a documented failure rate: LLM-driven agents fail multi-step enterprise tasks approximately 70% of the time in simulated enterprise environments. Workflow containment removes action sequence authority from the LLM. Graph state machines (implemented through frameworks such as LangGraph) define every permitted state, every permitted transition, and every decision gate at which execution pauses for validation. Plan-then-execute separation requires the agent to produce a complete, validated action sequence before execution begins, with a human-in-the-loop review gate before a single execution step runs.

Layer Two: Security Containment. The Dual LLM cognitive sandbox separates the processing of untrusted data from the privileged planner through a physical architectural boundary. A sandboxed LLM processes all external data. Its outputs are structured summaries, not raw data passed directly to the planner. No direct data channel exists between the sandboxed LLM and the planner. OsoHQ’s 2025 analysis establishes what is at stake: an AI agent with the same access permissions as a human employee can execute the equivalent of a year’s worth of human errors in seconds. Zero-trust role-based access control scopes permissions dynamically to the current task, revoked immediately upon completion.

Layer Three: Economic Containment. Google Cloud’s April 2025 launch of the Agent2Agent Protocol created the infrastructure for machine-speed commerce between AI agents at enterprise scale. The $15 trillion B2B commerce figure projected through agent exchanges by 2028 is a systemic risk magnitude. The October 2025 crypto flash crash (which produced $19.3 billion in forced liquidations as documented by CoinGlass) demonstrated the mechanism: AI systems reacting identically to shared inputs at machine speed, amplifying each other’s decisions until human circuit breakers could not respond. Economic containment installs automated circuit breakers that halt execution and escalate to human authority before cascade conditions propagate.

The Irreversibility the Board Must Understand

There is a contrary position that deserves serious engagement before being set aside. The argument: governance architecture is expensive, model alignment is improving, and regulatory requirements remain modest enough that policy-level governance satisfies current fiduciary obligations.

This argument is structurally wrong on the timing dimension.

Ungoverned agentic systems accumulate integrations, data pipelines, and organizational workflows built around their specific behavioral patterns. When those workflows become load-bearing, rearchitecting them to support deterministic containment costs exponentially more than building containment from the start. The governance expertise market is supply-constrained: governance architects who understand state machine design, cognitive sandbox implementation, and circuit breaker calibration are a talent pool that late movers will not find at any price within 24 months.

EU AI Act enforcement takes effect August 2026. First enforcement actions set interpretive precedent governing all subsequent compliance determinations. The enterprises that engage proactively operate in a fundamentally different regulatory environment than those who begin compliance work after the first precedents are set.

Gartner’s June 2025 research quantifies the cost of inaction: over 40% of agentic AI projects will be canceled by end of 2027, explicitly citing inadequate governance as the primary failure driver.

The Board Directive

The governance question boards should be asking in every AI discussion is not whether governance policies are in place. The correct question is whether every control function that cannot afford to fail has been externalized from the LLM’s decision authority into a deterministic system that enforces it structurally.

If the answer is no, the enterprise has a 64-point governance gap, and the gap is compounding with every agentic deployment that runs without containment architecture.

The Law of Deterministic Containment does not ask boards to constrain AI capability. It asks them to govern AI capability with the structural rigor that fiduciary responsibility requires. Organizations that build deterministic containment architecture in 2026 do not deploy less AI. They deploy more of it, more reliably, with the institutional confidence that only architectural governance produces.

The enterprises that defer this architecture are not delaying cost. They are accumulating it, at a compound rate, in a governance market where the window for proactive action measures in months, not quarters.

Glenn E. Daniels II is the founder of Touch Stone Publishers and author of The Law of Deterministic Containment: The CEO’s Governing Framework for Enterprise Agentic AI. The full executive playbook is available through Touch Stone Publishers.