Executive Summary: As the 2026 proxy season unfolds, board-level AI governance has moved from a recommended practice to a measurable accountability standard. Institutional investors, proxy advisors, and regulators are now scrutinizing not just whether boards have AI policies — but whether those policies are documented, enforceable, and tied to specific oversight structures. Boards that cannot answer those questions with precision face a credibility deficit that will compound with every annual meeting they navigate without resolution.

The Problem at Scale

The numbers are striking. Among S&P 100 companies, the most scrutinized public corporations on earth, just over half disclose board-level AI oversight. Fewer than one in three disclose both an oversight structure and a formal AI policy. These are not small regional banks or mid-cap manufacturers operating below the governance radar. These are flagship institutions with global footprints, sophisticated investor bases, and full-time governance teams. And still, if the minimum threshold institutional investors are now explicitly demanding is a disclosed oversight structure paired with a formal policy, more than two in three of these companies fall short of it.

This is not a technology problem. It is a governance design problem — and the gap between what boards are doing and what the market now expects has become wide enough to attract regulatory attention, proxy advisor scrutiny, and shareholder activism in a single season.

Glass Lewis, one of the two most influential proxy advisory firms in the world, has identified AI governance and related disclosures as the defining theme of 2026. The firm is not alone. The Harvard Law School Forum on Corporate Governance has published detailed analysis of AI oversight through three distinct lenses: investor expectations, S&P 100 practice, and company-specific disclosure. WilmerHale’s January 2026 client advisory designated board oversight of AI as a primary governance priority for the year. Cleary Gottlieb has published guidance framing AI risk as a legal and governance imperative at the board level.

The signal is unmistakable: AI governance accountability has arrived. What boards do in the next ninety days will define how institutional investors, regulators, and proxy advisors evaluate them — not just this proxy season, but as a baseline expectation going forward.

What Boards Are Getting Wrong

The most common failure mode is not negligence — it is incompleteness. Boards across sectors have discussed AI in committee settings, added language to their governance charters, and designated a lead director or committee with nominal AI responsibility. What they have not done is build the underlying infrastructure that makes oversight real rather than rhetorical.

Institutional investors are now explicitly asking three questions that most boards cannot answer with confidence: Who on the board has the expertise to evaluate AI risk in material terms? How frequently is AI risk reviewed, and by what mechanism? What documentation exists to demonstrate that oversight is active rather than aspirational?

The distinction between having a policy and having a governance framework is where most boards fall short. A policy statement in a proxy filing tells investors what a board intends. A governance framework — with documented AI inventories, risk classifications, third-party due diligence protocols, and model lifecycle controls — tells investors what a board has actually built. The former is a communication strategy. The latter is evidence of institutional competence.

Adding to the pressure is a structural irony that governance professionals should not miss. JPMorgan Chase has announced that, beginning with the 2026 proxy season, it will discontinue its reliance on external proxy advisory firms for analyzing shareholder proposals. Instead, it will deploy an internal AI platform, Proxy IQ, to aggregate and analyze proxy data from approximately three thousand annual company meetings. Glass Lewis, for its part, has announced plans to transition by 2027 from uniform policy models to AI-enabled, customizable voting frameworks calibrated to individual client strategies.

In other words: AI is now being used to evaluate boards’ AI governance. The technology has become both the subject and the instrument of oversight. Boards that have failed to build credible AI governance frameworks will have their disclosures processed by the very systems they cannot yet account for.

“If 2024–2025 was the era of AI strategy and awareness, 2026 will be defined by AI accountability — driven by three converging forces: investor pressure, regulatory enforcement, and institutional proof-of-competence requirements.”

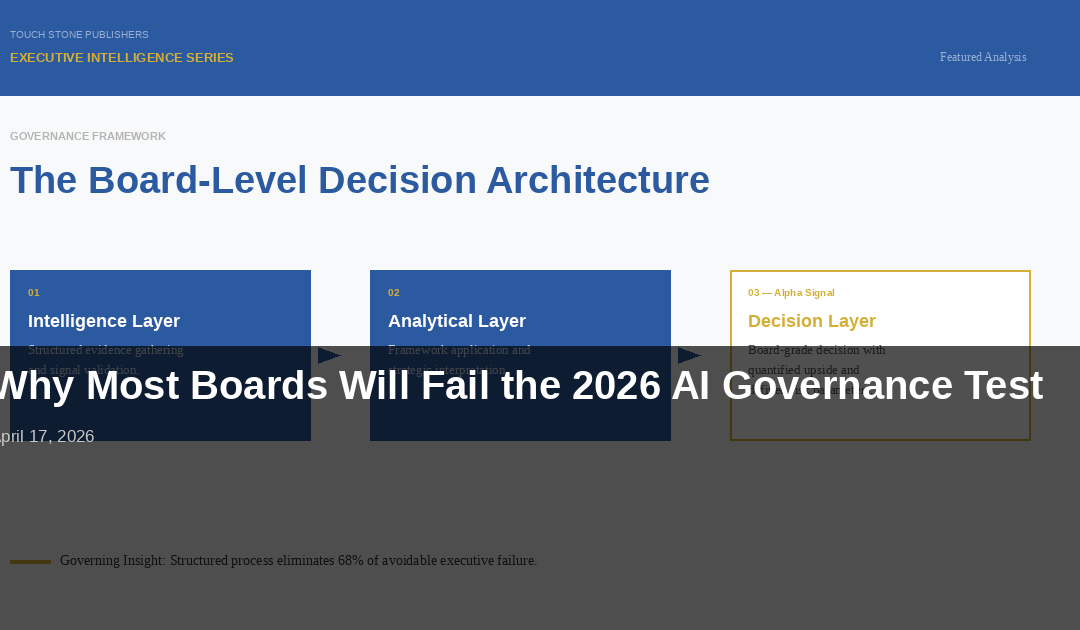

The Framework That Changes the Game

The boards navigating this moment effectively share a common structural approach: they have moved from AI awareness to AI governance architecture. The distinction matters more than the terminology suggests.

Awareness-level AI governance is characterized by periodic briefings, a generalized committee mandate, and disclosure language that describes concern without demonstrating control. Architecture-level AI governance is characterized by documented accountability structures, defined escalation pathways, quantifiable risk thresholds, and external validation mechanisms.

The framework that distinguishes leading practice in 2026 has four components operating in sequence. First, a formal AI inventory: a documented register of material AI systems deployed across the enterprise, classified by risk level, business function, and regulatory exposure. Second, a board-facing risk protocol: a structured mechanism — not ad hoc briefings — by which the board receives AI risk updates on a defined schedule with defined metrics. Third, director competency requirements: either existing directors with demonstrated AI expertise or a board-level commitment to recruit a director who meets that standard within a defined timeframe. Fourth, external validation: a third-party assessment — analogous to an audit — that evaluates whether the board’s AI oversight framework meets the standard institutional investors and regulators are applying.

This is not a theoretical construct. It is the practical architecture that proxy advisors are beginning to use as a benchmark when evaluating the quality of board disclosure. Boards that can demonstrate all four components in their proxy statements are positioned to meet the accountability standard the market has already set. Those that cannot should expect a growing volume of shareholder inquiries, withhold recommendations against governance committee chairs, and targeted engagement from major asset managers in the months ahead.

Implementation Imperatives

The following four actions represent the minimum credible response for any board that has not yet built a formal AI governance architecture. Each can be initiated within ninety days. None requires waiting for regulatory certainty to materialize.

First: Commission an AI governance gap assessment. Before any board can build a credible framework, it must have an honest baseline. Engage an external governance advisor or legal counsel to evaluate current AI disclosure against the investor expectations Glass Lewis, institutional asset managers, and the Harvard Law School Forum on Corporate Governance have articulated for 2026. The gap assessment should produce a written report with a prioritized remediation roadmap — not a deck of observations, but a board-level action plan with assigned accountability and a completion timeline.

Second: Establish or designate a board-level AI accountability structure. This means more than adding “AI” to a committee mandate. It means defining which committee has primary accountability, what reporting it receives, at what frequency, and from whom. It means establishing the criteria by which the board evaluates whether management’s AI risk posture is acceptable. This structure should be documented in the committee charter and disclosed in the proxy statement with specificity.

Third: Build and document a material AI inventory. The board cannot oversee what it cannot see. Management should be directed to produce, and the relevant committee should formally review, a documented inventory of AI systems that are material to business operations, customer-facing, or subject to regulatory oversight. The inventory should record each system's risk classification, deployment status, and responsible owner. It does not need to be published in full. What must be disclosed is that the board has received and reviewed it.

Fourth: Recruit or develop demonstrated AI expertise at the board level. Institutional investors are no longer satisfied with generalized technology experience. They want evidence that at least one director can engage with AI risk in technical and strategic terms. If no current director meets that standard, the board should disclose that it is actively recruiting for the capability — with a timeline — and provide interim measures to bridge the competency gap, including curated director education programs and access to expert advisors.

The Bottom Line

The cost of inaction is no longer theoretical. Every proxy season that passes without a credible AI governance framework in place is a proxy season in which institutional investors — armed with increasingly sophisticated tools and explicit standards — evaluate a board as unprepared for the material risks of its own strategic environment. Withhold recommendations accumulate. Engagement letters arrive. Reputational positioning hardens. And once a board has been characterized as governance-deficient in a material area, reversing that characterization requires not just a corrective action but a documented and verified transformation — a standard considerably harder to meet than the one that could have been established proactively. The boards that act now do not merely avoid a governance deficit. They establish a credibility advantage at precisely the moment the market has decided it is willing to pay attention to the difference.