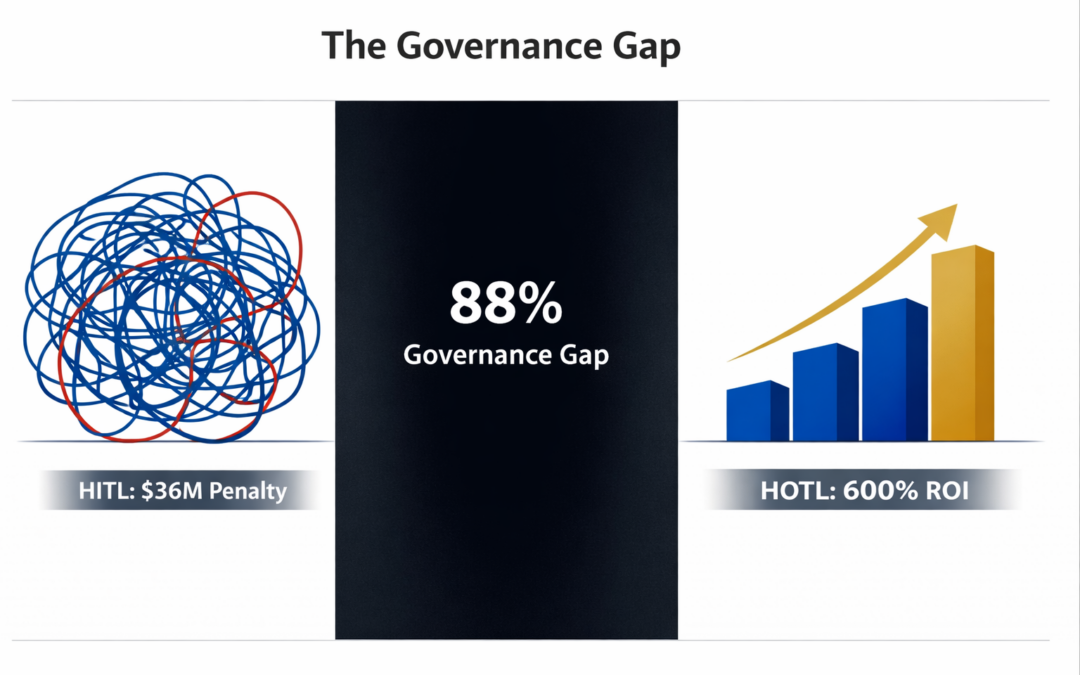

The Governance Gap: Why 88% of AI Deployments Operate Without Board Oversight—And What That Means for Fiduciary Duty

The transition from generative AI to autonomous agentic systems introduces a governance architecture problem, not a technology problem. Organizations implementing Human-in-the-Loop (HITL) models achieve individual productivity gains while creating systemic bottlenecks that compound into a $30-40M annual operational drag for mid-sized enterprises. The solution requires codifying governance into programmable infrastructure (Policy-as-Code, circuit breakers, and cryptographic audit trails), enabling Human-on-the-Loop (HOTL) and Human-out-of-the-Loop (HOOTL) architectures that operate at machine speed while maintaining precise board-level control.

Governing Evidence

The implementation paradox is empirically documented:

- 78% of organizations deploy AI in at least one business function, yet only 25% achieve expected ROI (McKinsey State of AI, 2025; IBM Think Circle Q4 2025)

- 70-85% of AI initiatives fail to meet expected outcomes; 95% of generative AI pilots fail to reach production (MIT Sloan Management Review, Summer 2025; RAND Corporation, 2024-2025)

- 88% of US enterprises deploying AI systems lack board-level governance policies, creating a 63-percentage-point gap between deployment and oversight (Techne.ai analysis of US Fortune 1000 companies, February 2026)

- 53+ AI-related securities class actions filed since March 2020, with filings doubling from 2023 to 2024; average D&O settlements reached $56 million in 2024 (Techne.ai, 2026)

- AI incident frequency increased 26% from 2022 to 2023, and 32% from 2023 to 2024, with 2,847+ documented failures tracked across sectors (Stanford AI Index Report 2024; AI Incident Database)

The financial impact for organizations maintaining HITL architectures:

- $12.9-15M average annual cost from poor data quality alone (Gartner cross-industry research, 2020-2025)

- $30-40M aggregate annual drag from decision fatigue, iteration paralysis, brand inconsistency, and cognitive overload in mid-sized enterprises ($300M revenue baseline)

- 62-96% cycle time reduction achieved by organizations transitioning to autonomous operations (ResearchGate, analysis of 247 implementations, 2024-2025)

- 150-850% ROI range within 12-36 months for successful implementations, with median 350% and top quartile 600-850% (Composite: McKinsey 2025, Microsoft 2024, Deloitte December 2025)

- 52-105% risk-adjusted expected ROI accounting for the 70-85% failure rate (15-30% success probability × 350% median return, treating failed initiatives as a 0% return)
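The risk-adjusted range above is a probability-weighted expected value. A minimal sketch of the arithmetic follows; the function name is hypothetical, and the default `failure_roi_pct=0` encodes the assumption, implicit in the range, that failed initiatives break even (0% ROI) rather than lose their investment.

```python
def risk_adjusted_roi(p_success: float, success_roi_pct: float,
                      failure_roi_pct: float = 0.0) -> float:
    """Probability-weighted expected ROI, in percent.

    p_success:       probability that an initiative succeeds (0 to 1)
    success_roi_pct: ROI of a successful initiative, in percent (e.g. 350)
    failure_roi_pct: ROI of a failed initiative, in percent; 0 means break-even
    """
    if not 0.0 <= p_success <= 1.0:
        raise ValueError("p_success must be between 0 and 1")
    return p_success * success_roi_pct + (1.0 - p_success) * failure_roi_pct


# A 70-85% failure rate means a 15-30% success probability.
low = risk_adjusted_roi(0.15, 350)
high = risk_adjusted_roi(0.30, 350)
print(f"{low:.1f}% to {high:.1f}%")  # prints "52.5% to 105.0%"
```

Passing a negative `failure_roi_pct` (for example -100 for a total write-off) shows how sensitive the expected value is to what a failed initiative actually costs.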

The pattern repeats across sectors: An enterprise deploys AI-assisted customer service. Individual agents process 4× more tickets. Management celebrates productivity gains. Six months later—higher employee turnover, degraded customer satisfaction scores, escalating compliance violations. The AI succeeded. The architecture failed. And the board had no monitoring system to see it coming.

This represents the largest unaddressed fiduciary exposure in modern enterprise governance. Yet the problem is poorly understood because it manifests as distributed inefficiency rather than catastrophic failure.

The board oversight gap exists not because directors ignore AI, but because they confuse activity with governance. Reviewing dashboards quarterly is not oversight. Under the Caremark doctrine, oversight requires structural systems—and 88% of enterprises lack them.

The Structural Problem: Human-in-the-Loop as a Bottleneck Architecture

Human-in-the-Loop models rest on a false premise: that humans can operate as quality gates for machine-generated outputs without becoming operational constraints. The mathematics are unforgiving.

Cognitive Load Amplification

AI systems increase transaction volume without increasing judgment capacity. A customer service operation implementing AI-assisted response generation demonstrates the mechanism:

- Traditional model: 45 responses per agent per day, 8 decision points per response = 360 daily decisions

- AI-assisted HITL model: 180 responses per agent per day, 3 decision points per response = 540 daily decisions

Individual tasks complete faster, yet total cognitive load increases 50% (540 versus 360 daily decisions). Research on decision fatigue holds that the quality of human judgment declines as decisions accumulate over the course of a day (Baumeister & Tierney, Willpower, 2011; Kahneman, Thinking, Fast and Slow, 2011). HITL architectures add decisions without adding recovery time, pushing reviewers further down that curve.
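The decision arithmetic in this example can be reproduced in a few lines. This only restates the figures given above; the class and field names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewModel:
    name: str
    responses_per_day: int
    decisions_per_response: int

    @property
    def daily_decisions(self) -> int:
        # Total judgment calls one agent makes per day
        return self.responses_per_day * self.decisions_per_response


traditional = ReviewModel("Traditional", responses_per_day=45, decisions_per_response=8)
hitl = ReviewModel("AI-assisted HITL", responses_per_day=180, decisions_per_response=3)

increase = hitl.daily_decisions / traditional.daily_decisions - 1
print(traditional.daily_decisions, hitl.daily_decisions, f"{increase:.0%}")
# prints "360 540 50%"
```

Decision points per response fall from 8 to 3, but volume rises fourfold, so the product (the load a reviewer actually carries) still grows.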

The Brand Consistency Decay Pattern

When humans serve as approval layers for AI outputs, consistency becomes probabilistic rather than deterministic. Analysis of content review patterns at a global consumer brand producing 2,500 pieces per month reveals systematic degradation:

- Morning reviews (8-11am): 94% brand consistency score

- Afternoon reviews (2-5pm): 87% brand consistency score

- Late-day reviews (5-7pm): 76% brand consistency score

The degradation is biological, not volitional. Circadian rhythms, glucose depletion, and cognitive fatigue are constants. Research by Lucidpress and Demand Metric quantifies the financial impact: 10-20% revenue reduction from inconsistent brand presentation. For a $300M enterprise, a conservative 10% penalty equals $30M in annual value erosion.

The Aggregate HITL Penalty

Synthesizing documented impacts yields a complete cost model for a mid-sized enterprise ($300M annual revenue, 850 employees, 60% knowledge workers at $95,000 average loaded labor cost):

| Cost Category | Annual Impact |
|---|---|
| Decision fatigue productivity loss (15% of knowledge worker value) | $4,500,000 |
| Iteration paralysis (marketing/product/design roles, 600 hrs/year per person × 50 employees) | $1,100,000 |
| Brand inconsistency penalty (10% of revenue, conservative estimate) | $30,000,000 |
| Burnout-related turnover costs (replacement cost for affected roles) | $890,000 |
| Total HITL Architectural Penalty | $36,490,000 |

Baseline assumptions: $300M revenue, 850 employees (510 knowledge workers), $95K avg. loaded cost, standard industry benchmarks for productivity loss and brand impact. Actual costs vary by organization size, industry vertical, and baseline efficiency.

This represents 12.2% of revenue—an operational tax that compounds quarterly and remains invisible to traditional financial analysis because it manifests as foregone efficiency rather than realized expense.
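The table total and the 12.2% share can be checked directly. A minimal sketch that sums the line items: the brand penalty is derived as 10% of the $300M baseline, while the other three figures are copied from the table rather than recomputed from the labor assumptions. Variable names are illustrative.

```python
REVENUE_USD = 300_000_000  # baseline annual revenue in the cost model

# Annual line items in USD. Brand inconsistency is derived as 10% of revenue;
# the other three are taken as stated in the table, not recomputed.
hitl_penalty = {
    "Decision fatigue productivity loss": 4_500_000,
    "Iteration paralysis": 1_100_000,
    "Brand inconsistency penalty": REVENUE_USD * 10 // 100,
    "Burnout-related turnover": 890_000,
}

total = sum(hitl_penalty.values())
share_of_revenue = total / REVENUE_USD
print(f"Total: ${total:,} ({share_of_revenue:.1%} of revenue)")
# prints "Total: $36,490,000 (12.2% of revenue)"
```

Keeping the line items in one structure makes it easy to substitute an organization's own figures and see how far the total moves from the $30-40M range.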

The Governance Gap and Caremark Liability

The Delaware Court of Chancery's decision in In re Caremark International Inc. Derivative Litigation (1996) established that directors have a duty to make a good-faith effort to ensure that adequate information and reporting systems exist. A board that fails to implement oversight systems, or consciously fails to monitor them once in place, faces potential liability for breach of the duty of loyalty.

AI systems increasingly drive business decisions, affect customer outcomes, and create regulatory exposure. The absence of board-level AI oversight constitutes exactly the gap Caremark addresses.

The 88% Exposure

Techne.ai's February 2026 analysis of D&O liability and AI governance documents the scope: 88% of US Fortune 1000 enterprises deploying AI lack board-level governance policies. The 63-percentage-point gap between deployment (88% have AI in production) and oversight (25% have board frameworks) represents boards operating without documented AI monitoring while their organizations deploy systems affecting customers, employees, financial performance, and regulatory compliance.

From an underwriting perspective, this gap is quantifiable risk. Major D&O insurers now explicitly query AI governance frameworks during policy renewal. Industry reports from 2025 renewals show premium increases of 15-40% for enterprises unable to demonstrate board-level oversight structures, with some carriers declining coverage entirely for high-risk AI deployments lacking governance documentation.

Defensible Governance Under Caremark Requires:

- Board-level assignment of AI oversight to a specific committee (Audit, Risk, or Technology) with AI explicitly included in committee charter

- Quarterly reporting to the full board on AI deployments, risk exposures, and incident responses

- Documented AI risk taxonomy aligned with enterprise risk management framework

- Regular third-party audits of AI systems and governance processes

- Board education on AI capabilities, limitations, and regulatory requirements

- Crisis management protocols for AI-related incidents with defined escalation thresholds

The absence of these elements creates not merely operational risk but fiduciary exposure. Boards cannot claim good faith monitoring of a material risk they have not formally acknowledged.

The Contrary Position: Why HITL Advocates Resist Autonomous Operations

The resistance to autonomous architectures rests on three claims, each structurally defensible but ultimately insufficient:

Claim 1: Human Oversight Provides Necessary Quality Control

The argument: AI systems make errors; humans catch them; removing human review increases error rates.

The empirical reality contradicts this. Analysis of 247 implementations shows autonomous systems with policy-as-code governance achieve 0.9% error rates versus 4.2% for human-reviewed processes (ResearchGate, 2024-2025). Human reviewers experience decision fatigue, inconsistent standards, and systematic afternoon degradation. Machines do not.

The quality control argument confuses review with governance. Humans provide governance through rule specification. Machines enforce those rules with perfect consistency. The question is not "human or machine?" but "judgment or execution?"—and judgment should occur at the policy layer, not the transaction layer.

Claim 2: Autonomous Systems Create Unacceptable Liability Exposure

The argument: Humans can be held accountable; autonomous systems create accountability gaps; boards cannot delegate fiduciary duties to algorithms.

This reflects a category error. Human-on-the-Loop architectures do not eliminate human accountability—they restructure it. Boards remain accountable for establishing governance frameworks, defining risk thresholds, and monitoring system performance. The difference: accountability flows through policy specification rather than transaction approval.

Moreover, cryptographic audit trails (C2PA standard) provide superior accountability to human review. Every autonomous decision carries immutable provenance data: which policy triggered, which model version executed, which human approved the governing rules. Human approval creates accountability theater. Policy-as-code creates accountability infrastructure.
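The provenance idea can be illustrated with a hash-chained log: each record stores the hash of the one before it, so editing any past record breaks verification. This is a sketch under stated assumptions, not an implementation of C2PA (which defines signed provenance manifests for media content). It uses plain SHA-256 chaining without digital signatures, and the record fields are hypothetical. A chain makes tampering detectable rather than impossible; a production system would also sign records and store them outside the writer's control.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Any

GENESIS_HASH = "0" * 64


def _digest(record: dict[str, Any]) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class AuditTrail:
    """Append-only, hash-chained log of autonomous decisions (illustrative only)."""
    entries: list[dict[str, Any]] = field(default_factory=list)

    def append(self, decision_id: str, policy_id: str, model_version: str,
               rule_approver: str, outcome: str) -> str:
        record = {
            "decision_id": decision_id,
            "policy_id": policy_id,          # which policy triggered
            "model_version": model_version,  # which model version executed
            "rule_approver": rule_approver,  # who approved the governing rule
            "outcome": outcome,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS_HASH,
        }
        record["hash"] = _digest(record)
        self.entries.append(record)
        return record["hash"]

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered record fails the check."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


trail = AuditTrail()
trail.append("txn-001", "refund-limit-v3", "model-2025-06", "risk-committee", "approved")
trail.append("txn-002", "refund-limit-v3", "model-2025-06", "risk-committee", "escalated")
print(trail.verify())                   # True
trail.entries[0]["outcome"] = "denied"  # tamper with history
print(trail.verify())                   # False
```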

Claim 3: Regulatory Frameworks Require Human Oversight

The argument: EU AI Act, NIST AI RMF, and sector-specific regulations mandate "human oversight"; autonomous operations violate compliance requirements.

This misreads the regulatory intent. The EU AI Act specifies "appropriate human oversight" calibrated to risk level—it does not mandate transaction-level human approval. Human-on-the-Loop architectures satisfy oversight requirements through:

- Human-specified policies enforced by code (oversight at the governance layer)

- Exception-based human intervention for high-risk or anomalous decisions (circuit breakers)

- Continuous human monitoring of system performance (observability infrastructure)

- Human authority to modify policies based on observed outcomes (feedback loops)

The regulatory requirement is human oversight, not human execution. Conflating the two perpetuates the architectural failure mode.

The Structural Response: Governance as Code

The transition from HITL to HOTL to HOOTL requires codifying governance into programmable infrastructure. This is not technological automation of human processes—it is architectural redesign of how governance operates.

Policy-as-Code (PaC) translates governance rules into machine-executable logic evaluated at runtime. Traditional governance relies on written guidelines interpreted by humans. PaC embeds rules directly into systems as admission controllers—policies that automatically block violations before they reach production.

Example: Content brand compliance

Traditional approach: "All content must align with brand voice guidelines" → human reviewers apply subjective judgment → inconsistent enforcement

PaC approach: Codified rules automatically reject content containing:

- Competitor brand mentions (list maintained in policy repository)

- Prohibited terminology (profanity, sensitive topics, regulated claims)

- Tone deviation >15% from brand voice baseline (ML-scored against corpus)

- Factual claims without citation (regex pattern matching)

Implementation complexity: 2-4 weeks for basic rules; 8-12 weeks for ML-augmented policies. Enforcement: 100% consistent, 24/7 operation, <50ms latency per request.
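A minimal sketch of the four content rules as a single admission check. Everything here is an assumption for illustration: the competitor and term lists, the threshold constant, the regex heuristic that treats a percentage or "studies show" as a factual claim, and the `tone_deviation` score, which the sketch takes as an input from a separate ML scorer rather than computing. It is not tied to any particular Policy-as-Code engine.

```python
import re
from dataclasses import dataclass, field

# Hypothetical policy data; in practice this would live in a versioned policy repository.
COMPETITORS = {"acme corp", "globex"}
PROHIBITED_TERMS = {"guaranteed cure", "risk-free"}
MAX_TONE_DEVIATION = 0.15  # reject if tone drifts more than 15% from the brand baseline

# Crude stand-in for claim detection: a percentage or "studies show" marks a factual
# claim, and a bracketed reference such as "[1]" marks a citation.
CLAIM_PATTERN = re.compile(r"\b\d+(?:\.\d+)?%|\bstudies show\b", re.IGNORECASE)
CITATION_PATTERN = re.compile(r"\[\d+\]")
SENTENCE_SPLIT = re.compile(r"(?<=[.!?])\s+")


@dataclass
class PolicyDecision:
    allowed: bool
    violations: list[str] = field(default_factory=list)


def evaluate_content(text: str, tone_deviation: float) -> PolicyDecision:
    """Admission check run before publishing.

    tone_deviation is assumed to come from a separate ML scorer
    (0.0 = exactly on the brand-voice baseline).
    """
    lowered = text.lower()
    violations = [f"competitor mention: {c}" for c in sorted(COMPETITORS) if c in lowered]
    violations += [f"prohibited term: {t}" for t in sorted(PROHIBITED_TERMS) if t in lowered]
    if tone_deviation > MAX_TONE_DEVIATION:
        violations.append(f"tone deviation {tone_deviation:.0%} exceeds {MAX_TONE_DEVIATION:.0%}")
    for sentence in SENTENCE_SPLIT.split(text.strip()):
        if CLAIM_PATTERN.search(sentence) and not CITATION_PATTERN.search(sentence):
            violations.append(f"uncited claim: {sentence}")
    return PolicyDecision(allowed=not violations, violations=violations)


ok = evaluate_content("Our new plan cuts setup time by 30% [1].", tone_deviation=0.05)
bad = evaluate_content("Unlike Acme Corp, results are risk-free. Studies show 40% gains.", 0.22)
print(ok.allowed)   # True
print(bad.allowed)  # False
```

Because every rule returns a named violation instead of a bare rejection, the same check doubles as an explanation for the content author and a record for auditors.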

The Multi-Layered Governance Stack

Autonomous operations require defense-in-depth through redundant safety mechanisms:

Layer 1: Technical Guardrails

Regex pattern blocking, ML-based content classifiers, PII detection. Catches obvious violations. Latency: <50ms.
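Layer 1 checks are simple pattern matches. A minimal sketch of regex-based PII detection and redaction, assuming US-style formats. The patterns are illustrative: they will miss real PII and flag some harmless numbers, which is why this layer pairs them with ML classifiers.

```python
import re

# Illustrative patterns only; real PII detection needs much broader coverage
# (names, addresses, international formats) and usually an ML classifier too.
PII_PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    # 13-16 digits, optionally separated by single spaces or hyphens
    "card_number": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def find_pii(text: str) -> list[str]:
    """Return the PII categories present in text (empty list = clean)."""
    return [label for label, pattern in PII_PATTERNS.items() if pattern.search(text)]


def redact(text: str) -> str:
    """Replace each match with a category placeholder such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label.upper()}]", text)
    return text


sample = "Contact jane.doe@example.com, SSN 123-45-6789, card 4111 1111 1111 1111."
print(find_pii(sample))  # ['email', 'us_ssn', 'card_number']
print(redact(sample))
```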

Layer 2: Context & Workflow Controls

Dual-control requirements (two agents must agree for high-risk actions), time-based restrictions (no autonomous financial transactions 11pm-6am), value thresholds (human approval required >$10K).
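The three Layer 2 controls compose naturally as a routing function. A minimal sketch, assuming a single local time zone and that blacked-out transactions go to a human rather than being dropped. The 11pm-6am window and $10K threshold come from the text; the type and outcome names are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import time

APPROVAL_THRESHOLD_USD = 10_000                         # human approval required above this
BLACKOUT_START, BLACKOUT_END = time(23, 0), time(6, 0)  # no autonomous payments 11pm-6am


@dataclass
class ProposedAction:
    kind: str                  # e.g. "financial_transaction", "config_change"
    amount_usd: float
    at: time                   # local time the action would execute
    high_risk: bool = False
    agreeing_agents: set[str] = field(default_factory=set)


def in_blackout(t: time) -> bool:
    # The window wraps past midnight, so it is "from 23:00 OR before 06:00".
    return t >= BLACKOUT_START or t < BLACKOUT_END


def route(action: ProposedAction) -> str:
    """Return "execute", "human_approval", or "hold_for_second_agent"."""
    if action.kind == "financial_transaction" and in_blackout(action.at):
        return "human_approval"            # time-based restriction
    if action.amount_usd > APPROVAL_THRESHOLD_USD:
        return "human_approval"            # value threshold
    if action.high_risk and len(action.agreeing_agents) < 2:
        return "hold_for_second_agent"     # dual control: two distinct agents must agree
    return "execute"


print(route(ProposedAction("financial_transaction", 500, time(23, 30))))  # human_approval
print(route(ProposedAction("financial_transaction", 500, time(9, 0))))    # execute
```

Using a set for `agreeing_agents` means the same agent approving twice still counts once, which is the point of dual control.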

Layer 3: Behavioral Guardrails (Constitutional AI)

Models trained against an explicit set of ethical principles (Anthropic's Constitutional AI methodology), combined with self-critique and revision loops before output. Estimated additional cost: 2-3× base model inference, because each critique and revision is an extra reasoning pass.
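The 2-3× cost range follows from counting model calls. A minimal sketch of an inference-time critique-and-revise loop, with `model` as a hypothetical text-in, text-out callable and a stub standing in for a real model: one round costs two calls when the critique passes and three when a revision is needed. This illustrates only the inference-time loop described here; Anthropic's Constitutional AI paper uses critiques and revisions mainly to generate training data, not as a loop run on every request.

```python
from typing import Callable

ModelFn = Callable[[str], str]  # hypothetical: prompt in, completion out


def critique_and_revise(prompt: str, model: ModelFn, principles: list[str],
                        max_rounds: int = 1) -> tuple[str, int]:
    """Draft, critique the draft against principles, revise if needed.

    Returns the final text and the number of model calls made.
    """
    rules = "\n".join(f"- {p}" for p in principles)
    draft = model(prompt)
    calls = 1
    for _ in range(max_rounds):
        critique = model(
            f"CRITIQUE\nPrinciples:\n{rules}\nDraft:\n{draft}\n"
            "Reply OK if the draft complies; otherwise list the problems."
        )
        calls += 1
        if critique.strip() == "OK":
            break                       # draft passes, stop critiquing
        draft = model(f"REVISE\nProblems:\n{critique}\nDraft:\n{draft}")
        calls += 1                      # each revision adds one more call


    return draft, calls


def stub_model(prompt: str) -> str:
    # Stand-in for a real model so the loop can run offline.
    if prompt.startswith("CRITIQUE"):
        return "OK" if "refund policy" in prompt else "Missing refund policy reference."
    if prompt.startswith("REVISE"):
        return "Under our refund policy, items can be returned within 30 days."
    return "You can return the item."


text, calls = critique_and_revise("How do I return an item?", stub_model,
                                  ["Cite the applicable policy."])
print(calls)  # 3
```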

Layer 4: Circuit Breakers

Automatic rerouting to human review when an anomaly is detected. Escalation triggers: unusual patterns, compliance violations, and error rates exceeding tolerance.
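A minimal sketch of a Layer 4 circuit breaker, assuming errors are reported per transaction and that an external anomaly detector (not shown) can flag individual requests. The window size, 5% tolerance, and minimum sample count are hypothetical defaults. Unlike the classic software circuit-breaker pattern, which retries automatically after a cool-down, this one stays open until a human resets it, matching the rerouting-to-human-review behavior described above.

```python
from collections import deque


class CircuitBreaker:
    """Routes work to human review once the recent error rate exceeds tolerance."""

    def __init__(self, window: int = 100, max_error_rate: float = 0.05,
                 min_samples: int = 20) -> None:
        self.outcomes: deque[bool] = deque(maxlen=window)  # True = error
        self.max_error_rate = max_error_rate
        self.min_samples = min_samples  # avoid tripping on tiny samples
        self.tripped = False

    def error_rate(self) -> float:
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else 0.0

    def record(self, is_error: bool) -> None:
        self.outcomes.append(is_error)
        if len(self.outcomes) >= self.min_samples and self.error_rate() > self.max_error_rate:
            self.tripped = True  # stays open until a human calls reset()

    def route(self, anomalous: bool = False) -> str:
        # Per-request anomaly flags escalate even while the breaker is closed.
        return "human_review" if self.tripped or anomalous else "autonomous"

    def reset(self) -> None:
        """Called by a human operator after investigating the incident."""
        self.outcomes.clear()
        self.tripped = False


breaker = CircuitBreaker()
breaker.record(is_error=False)
print(breaker.route())  # autonomous
```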

The architecture is not "remove humans"—it is "redeploy humans from transaction execution to strategic governance."

The Model Context Protocol Gateway

MCP gateways sit between AI agents and enterprise systems, enforcing:

- Identity-based access control (which agents can access which systems)

- Action logging and cryptographic audit trails (C2PA provenance)

- Rate limiting and cost controls

- Veto protocol triggers (human approval for designated action classes)

Implementation: 4-8 weeks for basic MCP gateway; 12-16 weeks for enterprise-grade with full integration.
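A minimal sketch of the gateway's decision path, written as a plain Python policy layer. It is not part of the Model Context Protocol itself, which defines how clients and servers exchange tool calls; a gateway like this would sit in front of MCP servers. The agent IDs, tool names, burst size, and refill rate are hypothetical, the rate limiter is a standard token bucket, and the log is a plain list rather than a cryptographic audit trail.

```python
from dataclasses import dataclass


@dataclass
class TokenBucket:
    capacity: float
    refill_per_sec: float
    tokens: float
    last: float  # timestamp of the previous check, in seconds

    def allow(self, now: float) -> bool:
        # Refill in proportion to elapsed time, capped at capacity, then spend one token.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.refill_per_sec)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class AgentGateway:
    """Hypothetical policy layer in front of MCP tool servers."""

    def __init__(self, acl: dict[str, set[str]], veto_tools: set[str],
                 burst: int = 5, refill_per_sec: float = 1.0) -> None:
        self.acl = acl                # agent id -> tools it may call
        self.veto_tools = veto_tools  # tools that always need human sign-off
        self.burst = burst
        self.refill_per_sec = refill_per_sec
        self.buckets: dict[str, TokenBucket] = {}
        self.log: list[tuple[float, str, str, str]] = []

    def handle(self, agent_id: str, tool: str, now: float) -> str:
        if agent_id not in self.buckets:
            self.buckets[agent_id] = TokenBucket(self.burst, self.refill_per_sec, self.burst, now)
        if tool not in self.acl.get(agent_id, set()):
            decision = "denied"                  # identity-based access control
        elif not self.buckets[agent_id].allow(now):
            decision = "rate_limited"            # per-agent rate limit
        elif tool in self.veto_tools:
            decision = "pending_human_approval"  # veto protocol trigger
        else:
            decision = "forwarded"
        self.log.append((now, agent_id, tool, decision))  # every call is logged
        return decision


gateway = AgentGateway({"billing-agent": {"read_invoice", "issue_refund"}},
                       veto_tools={"issue_refund"}, burst=2, refill_per_sec=1.0)
print(gateway.handle("billing-agent", "issue_refund", now=0.0))  # pending_human_approval
print(gateway.handle("support-agent", "issue_refund", now=0.0))  # denied
```

Checking access before the rate limit means unauthorized calls never consume an agent's quota, and every outcome, including denials, lands in the log.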

The Veto Protocol deserves specific attention. It is the bridge between autonomous operations and board-level control. Organizations define action classes requiring human approval:

- Financial transactions >$X threshold

- Customer-facing decisions with regulatory implications

- System modifications to production environments

- Anomalous patterns flagged by circuit breakers

In documented implementations, veto triggers represent a small minority of transaction volume (ResearchGate analysis shows typical range of 5-10% in early deployments, declining to 2-5% as policies mature)—enough to maintain control, low enough to preserve velocity.
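The four action classes can be expressed as named predicates, which also makes the veto rate directly measurable as the share of actions triggering at least one class. A sketch under stated assumptions: the field names are hypothetical, and the financial threshold stays a caller-supplied parameter because the text leaves it to each organization.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class AgentAction:
    kind: str                        # e.g. "payment", "deploy"
    amount_usd: float = 0.0
    regulatory_impact: bool = False  # customer-facing with regulatory implications
    modifies_production: bool = False
    anomaly_flag: bool = False       # raised by a circuit breaker


VetoRule = Callable[[AgentAction], bool]


def build_veto_classes(financial_threshold_usd: float) -> dict[str, VetoRule]:
    # The threshold is an organizational choice, so callers must supply it.
    return {
        "financial_over_threshold":
            lambda a: a.kind == "payment" and a.amount_usd > financial_threshold_usd,
        "regulated_customer_decision": lambda a: a.regulatory_impact,
        "production_change": lambda a: a.modifies_production,
        "circuit_breaker_flag": lambda a: a.anomaly_flag,
    }


def veto_reasons(action: AgentAction, classes: dict[str, VetoRule]) -> list[str]:
    return [name for name, rule in classes.items() if rule(action)]


def veto_rate(actions: list[AgentAction], classes: dict[str, VetoRule]) -> float:
    """Share of actions that trigger at least one veto class."""
    if not actions:
        return 0.0
    return sum(1 for a in actions if veto_reasons(a, classes)) / len(actions)


# Example only: 50,000 is an arbitrary illustration value, not a recommendation.
classes = build_veto_classes(financial_threshold_usd=50_000)
week = [AgentAction("payment", 75_000)] + [AgentAction("payment", 120)] * 19
print(veto_rate(week, classes))  # 0.05
```

Tracking `veto_rate` over time is one way to see whether policies are maturing in the way the ranges above describe.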

The Operationalization Bridge: From Architecture to Implementation

The transition from HITL to HOTL follows a structured progression:

Phase 1: Readiness Assessment (Weeks 1-4)

40% of organizations fail the readiness assessment. Common gaps: inadequate data quality (62%), insufficient executive commitment (31%), and concurrent transformations creating change fatigue (28%). Organizations that proceed without meeting the thresholds have an 89% failure rate. It is better to delay 6-12 months and build foundations than to proceed prematurely.

Critical success factors:

- Executive sponsorship: CEO/COO >5 hours/month active involvement

- Data readiness: >85% data quality, documented lineage, API access

- Technical infrastructure: Cloud-native platforms, observability tools deployed

- Change capacity: No concurrent enterprise transformations; <15% annual turnover

- Budget allocation: $2-5M for pilot + scale across 36 months
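The readiness thresholds translate into a simple gate. A sketch, assuming each factor has already been measured; the field names are hypothetical, and the budget check uses only the $2M low end of the stated range as a minimum.

```python
from dataclasses import dataclass


@dataclass
class ReadinessProfile:
    exec_hours_per_month: float      # CEO/COO active involvement
    data_quality: float              # share of data meeting quality standards, 0 to 1
    documented_lineage: bool
    api_access: bool
    cloud_native: bool
    observability_deployed: bool
    concurrent_transformations: int
    annual_turnover: float           # 0 to 1
    budget_usd_36_months: float


def readiness_gaps(p: ReadinessProfile) -> list[str]:
    """Return the critical success factors that are not met (empty list = ready)."""
    checks = {
        "executive sponsorship (>5 h/month)": p.exec_hours_per_month > 5,
        "data quality (>85%)": p.data_quality > 0.85,
        "documented data lineage": p.documented_lineage,
        "API access": p.api_access,
        "cloud-native platforms": p.cloud_native,
        "observability tools": p.observability_deployed,
        "no concurrent transformations": p.concurrent_transformations == 0,
        "annual turnover (<15%)": p.annual_turnover < 0.15,
        "budget (at least $2M over 36 months)": p.budget_usd_36_months >= 2_000_000,
    }
    return [factor for factor, met in checks.items() if not met]


profile = ReadinessProfile(2, 0.80, True, True, True, True, 1, 0.10, 3_000_000)
print(readiness_gaps(profile))
# ['executive sponsorship (>5 h/month)', 'data quality (>85%)', 'no concurrent transformations']
```

Returning the list of unmet factors, rather than a single pass/fail flag, gives the 6-12 month foundation-building period a concrete backlog.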

Phase 2: Pilot Selection and Deployment (Months 1-6)

Ideal pilot characteristics: high volume (>500 instances/month), clear decision criteria (can be codified), low catastrophic risk (failure is recoverable), measurable outcomes (baseline metrics tracked), supportive process owner (champion willing to iterate).
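The five pilot characteristics can be screened the same way. A minimal sketch; the field names and the example volumes are hypothetical, and the booleans assume someone has already judged each criterion for the candidate process.

```python
from dataclasses import dataclass


@dataclass
class PilotCandidate:
    name: str
    monthly_volume: int
    criteria_codifiable: bool
    failure_recoverable: bool
    baseline_metrics_tracked: bool
    has_champion: bool


def missing_pilot_criteria(c: PilotCandidate) -> list[str]:
    """Return the ideal-pilot characteristics the candidate lacks (empty = strong fit)."""
    checks = [
        ("high volume (>500/month)", c.monthly_volume > 500),
        ("codifiable decision criteria", c.criteria_codifiable),
        ("recoverable failure", c.failure_recoverable),
        ("baseline metrics", c.baseline_metrics_tracked),
        ("supportive process owner", c.has_champion),
    ]
    return [label for label, met in checks if not met]


candidates = [
    PilotCandidate("Invoice approval routing", 2_400, True, True, True, True),
    PilotCandidate("Annual strategy planning", 1, False, False, False, True),
]
for c in candidates:
    print(c.name, missing_pilot_criteria(c))
```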

Good pilot candidates:

- Invoice processing and approval routing

- Customer service tier-1 inquiry resolution

- Employee expense report validation

- Content moderation and brand compliance checks

- Inventory replenishment recommendations

Poor pilot candidates (which account for 65% of failed pilots):

- Strategic decision-making requiring executive judgment

- Creative work demanding subjective aesthetic evaluation

- High-stakes customer decisions with major reputational exposure

- Processes lacking baseline performance metrics

- Mission-critical systems where failure creates safety risks

Phase 3: Scaling and Governance Maturation (Months 7-24)

Organizations achieving top-quartile ROI (600-850%) share common patterns:

- Dedicated AI governance team (5-15 FTEs depending on scale)

- Three-tiered oversight: Board Committee → Executive Council → Operational Team

- Monthly board reporting on deployments, incidents, and risk metrics

- Quarterly third-party audits of AI systems

- Continuous workforce reskilling (evaluators, governance specialists, AI trainers)

The workforce transition reality: Even "augmentation" implementations reduce headcount needs 20-40% over 24 months through natural attrition and redeployment. Organizations claiming "no job losses" create cynicism. Better approach: honest communication about transition timeline (18-36 months), generous severance/reskilling packages, priority hiring for new roles created.

For unionized environments, this timeline extends 12-24 months to accommodate collective bargaining requirements, WARN Act notification periods (60-90 days for mass layoffs), and negotiated transition agreements. Healthcare, manufacturing, and public sector organizations should budget additional time and legal resources for labor relations management.

Touchstone Law #7

The Law of Architectural Accountability: Organizations that delegate judgment to humans operating at transaction velocity create accountability theater, not accountability infrastructure. True accountability flows through policy specification, cryptographic audit trails, and exception-based human intervention—not through exhausted reviewers approving decisions they lack context to evaluate.

Under Caremark, boards satisfy the duty of loyalty by implementing reporting systems for material risks. Policy-as-Code is the reporting system—it creates real-time, auditable evidence of governance decisions executed at scale. Dashboard reviews every quarter are not oversight systems. They are lagging indicators of systems that may not exist.

The governance gap exists because boards confuse activity with oversight. True governance requires:

- Structural assignment of authority — which decisions can machines make autonomously? Which require human approval? At what thresholds?

- Policy specification in executable form — not guidelines for humans to interpret, but code for systems to enforce

- Continuous monitoring of policy effectiveness — are autonomous systems achieving intended outcomes? Are circuit breakers triggering appropriately?

- Rapid iteration on observed failures — when incidents occur, how quickly can policies be updated and redeployed?

The architectural shift from HITL to HOTL to HOOTL is not about removing humans from operations. It is about deploying human judgment where it creates value: defining strategy, specifying policies, monitoring outcomes, and iterating based on feedback.

The alternative—maintaining HITL architectures while AI systems proliferate—creates compounding liability. The 88% without governance frameworks are not operating efficiently. They are operating outside governance. And when the incident occurs—algorithmic bias lawsuit, regulatory violation, safety failure—the board will face a stark question: Did you establish oversight systems commensurate with the risk?

Under Caremark, conscious failure to monitor material risks breaches the duty of loyalty. The governance gap is no longer theoretical. It is documented, quantified, and creating securities litigation at accelerating rates.

The choice is not whether to govern autonomous systems. The choice is whether to govern them now, while you control the timeline—or later, when regulators, plaintiffs, and insurers control it for you.

Retiring and Establishing: The Governance Reframe

| Retire This Frame | Establish This Frame |
|---|---|
| AI as productivity tool requiring human oversight | AI as operational infrastructure requiring governance architecture |
| Human-in-the-Loop as quality assurance | Policy-as-Code as quality assurance; humans as exception handlers |
| Board oversight through quarterly dashboard review | Board oversight through governance framework approval and incident monitoring |
| Success measured by individual productivity gains | Success measured by organizational velocity without architectural drag |
| Risk management through approval processes | Risk management through circuit breakers and cryptographic audit trails |
| Compliance through human review | Compliance through codified policies and real-time enforcement |
| ROI as median outcome (350%) | ROI as risk-adjusted expected value (52-105% accounting for failure rates) |

The transition is not gradual adoption. It is architectural migration—from systems designed for human-speed execution to systems designed for machine-speed execution with human-defined boundaries.

Organizations that recognize this distinction will capture the 600-850% ROI outcomes documented in top-quartile implementations. Those that continue optimizing HITL architectures will discover, too late, that they have perfected an obsolete model.

The governance gap is closable. The technology exists. The frameworks are documented. The empirical evidence is comprehensive.

What remains is executive will—and board-level recognition that this is not an IT decision. It is a governance decision. And governance decisions belong in the boardroom.

References

- Anthropic. (2022). Constitutional AI: Harmlessness from AI Feedback. Research methodology for multi-layer AI safety.

- Baumeister, R. & Tierney, J. (2011). Willpower: Rediscovering the Greatest Human Strength. Penguin Press.

- Deloitte Global Boardroom Program. (January-February 2025). Governance of AI: A Critical Imperative for Today's Boards, 2nd Edition. Survey of 695 board members and executives across 56 countries.

- Harvard Business School. (2024). Navigating the Jagged Technological Frontier: Field Experimental Evidence of the Effects of AI on Knowledge Worker Productivity and Quality.

- IBM Institute for Business Value. (November 2025). The 2025 CDO Study: The AI Multiplier Effect.

- IBM Think Circle. (Q4 2025). Executive Roundtable on AI ROI Measurement and Organizational Challenges.

- In re Caremark International Inc. Derivative Litigation, 698 A.2d 959 (Del. Ch. 1996).

- Kahneman, D. (2011). Thinking, Fast and Slow. Farrar, Straus and Giroux.

- Knostic AI. (January 2026). The 20 Biggest AI Governance Statistics and Trends of 2025.

- Lucidpress & Demand Metric. (2024). Brand Consistency Impact Study. Analysis of revenue effects from brand presentation inconsistency.

- McKinsey & Company. (2025). The State of AI in 2025: Generative AI's Breakout Year.

- MIT Sloan Management Review. (Summer 2025). Why 95% of Generative AI Pilots Are Failing.

- Partnership on AI. (2016-2025). AI Incident Database (AIID). Comprehensive tracking of AI failures and ethical concerns.

- RAND Corporation. (2024-2025). AI Implementation Study: Success Factors and Failure Patterns.

- ResearchGate. (2024-2025). The Return on Investment (ROI) of Intelligent Automation: Assessing Value Creation via AI-Enhanced Financial Process Transformation. Analysis of 247 organizations across 15 industries.

- Stanford HAI. (2024). Artificial Intelligence Index Report 2024. Comprehensive analysis of AI trends, incidents, and adoption patterns.

- Techne.ai. (February 2026). AI Governance and D&O Liability: What Every Board Needs to Know in 2026. Analysis of US Fortune 1000 AI governance practices and D&O insurance implications.