The Question Your Board Is Not Asking

A governance diagnostic for executive peer groups navigating the authority gap in AI deployment.

Consider the conversation that follows an AI governance failure. Not the press statement. Not the regulatory response. The internal conversation, in the room, when someone asks: who authorized this?

The answer determines whether your organization has a governance problem or a liability problem. In most organizations confronting this question for the first time, the answer reveals something more consequential than either: the authorization was implicit. The AI system was deployed. The decision parameters were assumed. The accountability chain was never drawn.

That is not an oversight. It is an architecture failure. And architecture failures do not announce themselves until they are most costly.

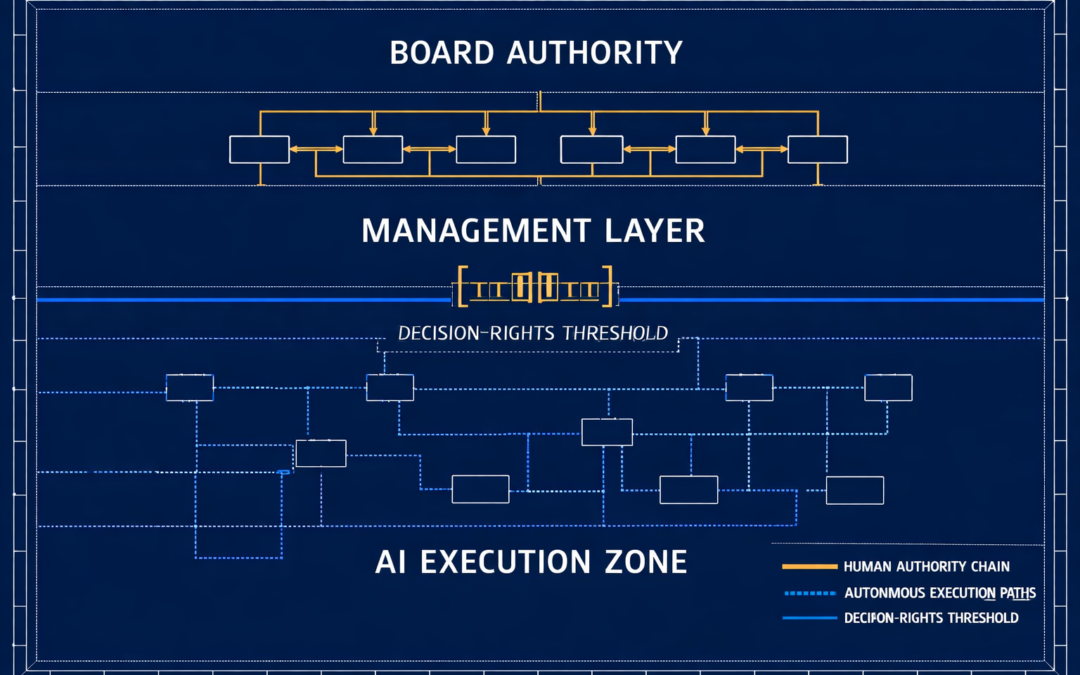

“The question before your board is not whether it approved the AI deployment. The question is whether it approved the decision authority the system now exercises on your behalf.”

The Assumption Your Peer Group Is Making

Raise this in your next executive session and observe the responses. Ask your peers: when your organization approved its most consequential AI deployment, what specifically was authorized? A technology purchase. An operating budget. A vendor contract. In most cases, the answer covers all three. What it omits is the formal specification of the decision authority the system now exercises.

That missing specification is the decision-rights threshold. Its absence is the structural condition that converts AI deployment from a competitive investment into an unquantified governance exposure. Sixty-four percent of boards currently operate without a formal AI governance framework.1 That statistic does not characterize technology immaturity. It describes an authority vacuum at the governance layer of organizations that believe they have already resolved the question.

The working assumption in most peer advisory circles is that governance follows deployment. That once the system is operational, the governance infrastructure catches up. The forensic record of AI governance failures argues the opposite: governance that follows deployment does not govern. It documents. And documentation of outcomes that a board had no structural capacity to influence is not oversight. It is evidence that oversight was absent.

Ask your board four questions without management preparation. Which committee owns AI oversight, and does its charter formally state that mandate? What is the mechanism that surfaces AI risk signals to the board independent of management reporting? What are the three most consequential AI governance decisions reviewed in the past 12 months, and what is the documented institutional response to each? What is each director’s verified AI competency baseline? If these four questions produce silence, the governance architecture does not yet exist.

What Velocity Means for Your Organization Specifically

Here is the structural problem that no governance framework designed for human decision chains can absorb without architectural redesign. A human credit officer makes approximately 80 decisions per day. The AI system that assumed half her portfolio makes 40,000 decisions per hour. The governance framework currently overseeing both was designed for one of them.

This is the velocity mismatch documented in the Deloitte AI Risk Governance Survey as an average 18-month lag between an AI governance failure and board-level visibility.2 That lag is not produced by information suppression or organizational dysfunction. It is the arithmetic of oversight cycles calibrated to human decision velocity applied to machine execution speed. By the time a quarterly audit surfaces an anomaly in a system operating at 40,000 decisions per hour, the anomaly is historical. Liability has already accrued. The accountability chain is reconstructing its own record after the fact.

The practical question for your peer group is not abstract: how many decisions is each AI system in your portfolio making per unit of time, and what is the governance cycle designed to detect and respond to anomalies in that output? If those two figures are not in the same order of magnitude, the framework is not governing the system. It is ratifying outcomes it had no structural capacity to prevent.
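The comparison can be made concrete with a few lines of arithmetic. The sketch below takes the two decision rates cited above and counts how many decisions each actor makes inside a single quarterly audit cycle. Three inputs are assumptions added for illustration and do not come from any cited source: the AI system runs around the clock, a quarter spans 91 calendar days, and a human officer works 63 days in that quarter.

```python
import math

# Illustrative assumptions, not figures from the cited surveys.
HOURS_PER_DAY = 24   # assumption: the AI system runs around the clock
QUARTER_DAYS = 91    # assumption: calendar days between quarterly audits
WORKING_DAYS = 63    # assumption: human working days in the same quarter


def decisions_per_review_cycle(rate_per_day: int, days_in_cycle: int) -> int:
    """Decisions executed between two consecutive governance reviews."""
    return rate_per_day * days_in_cycle


# Rates from the text: 40,000 decisions per hour (AI), 80 per day (human).
ai_per_cycle = decisions_per_review_cycle(40_000 * HOURS_PER_DAY, QUARTER_DAYS)
human_per_cycle = decisions_per_review_cycle(80, WORKING_DAYS)

# Base-10 logarithm of the ratio: the gap expressed in orders of magnitude.
gap = math.log10(ai_per_cycle / human_per_cycle)

print(f"AI decisions per audit cycle:    {ai_per_cycle:,}")
print(f"Human decisions per audit cycle: {human_per_cycle:,}")
print(f"Gap in orders of magnitude:      {gap:.1f}")
```

Under these assumptions the AI system executes 87,360,000 decisions before the first quarterly review, against 5,040 for the human officer, a gap of roughly four orders of magnitude. Substituting your own decision rates and review intervals yields the same diagnostic for each system in the portfolio.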

“A quarterly audit of a system making 40,000 decisions per hour does not constitute oversight. It constitutes historical documentation of outcomes the board had no structural capacity to influence.”

The Competitive Argument Your Peers Have Not Absorbed

The conversation about AI governance in most executive peer groups defaults to risk framing: regulatory compliance, Caremark exposure, D&O liability. These are legitimate frames, and this article does not minimize them. But they consistently obscure a performance argument the evidence supports more directly.

Organizations with structured AI governance platforms are 3.4 times more likely to achieve high operational effectiveness, according to Gartner’s 360-organization benchmark.3 JPMorgan Chase attributed $1.5 billion in value to its structured AI governance implementation in 2024.4 Neither result was produced by compliance discipline. Both were produced by decision-rights clarity: the organizational condition in which AI systems operate within formally defined authority boundaries, generating outcomes that the governance architecture can surface, evaluate, and act on in real time.

The insight most executive peer groups have not yet absorbed is that ungoverned AI environments do not move faster than governed ones. They accumulate liability faster while generating the friction of ad hoc escalation at every decision node lacking a documented mandate. The organizations accelerating deployment velocity are doing so through governance architecture, not despite it. The decision-rights threshold is not a constraint on deployment. It is the structural condition that makes deployment sustainable.

Three Decisions to Assign Before Your Session Closes

The Touch Stone Decision Architecture Framework™ identifies three institutional decisions that close the governance gap. No executive advisory group should conclude a session on AI governance without assigning named ownership to each.

The first is Committee Constitutionalization: not the informal addition of AI oversight to an existing committee’s agenda, but the formal establishment of a board-approved charter that specifies the committee’s mandate, membership qualifications, reporting cadence, and escalation authority. Delaware’s Caremark doctrine asks whether the board made a good-faith effort to put a reporting and oversight system in place, and the strongest evidence of that effort is a governance mandate formally documented before an incident occurred.5 Agenda items rarely supply that evidence. Charter amendments do.

The second is the Decision-Rights Boundary Definition: the formal board-level specification of which decisions the organization reserves for human judgment, which it delegates to autonomous AI execution, and which require human-AI collaborative validation before output becomes actionable. That boundary must be documented by system, by decision type, and by risk tier. Without it, every AI system in the portfolio operates under implicit default parameters. Implicit defaults are not defensible in litigation and are not competitively sustainable.
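One way to picture that boundary is as a register keyed by system, decision type, and risk tier. The sketch below is illustrative only: the class names, the three authority modes as coded, and the sample entries are hypothetical and are not drawn from the framework itself. The design point it demonstrates is that a lookup with no documented entry fails loudly instead of falling back to an implicit default.

```python
from dataclasses import dataclass
from enum import Enum


class Authority(Enum):
    HUMAN_ONLY = "reserved for human judgment"
    AUTONOMOUS = "delegated to autonomous AI execution"
    COLLABORATIVE = "requires human-AI validation before output is actionable"


@dataclass(frozen=True)
class BoundaryKey:
    """The three dimensions the boundary is documented by."""
    system: str
    decision_type: str
    risk_tier: str


class UndefinedBoundaryError(LookupError):
    """Raised when no documented boundary exists for a decision."""


class DecisionRightsRegister:
    """Hypothetical register of board-approved decision-rights boundaries."""

    def __init__(self) -> None:
        self._entries = {}

    def define(self, system: str, decision_type: str, risk_tier: str,
               authority: Authority) -> None:
        self._entries[BoundaryKey(system, decision_type, risk_tier)] = authority

    def authority_for(self, system: str, decision_type: str,
                      risk_tier: str) -> Authority:
        key = BoundaryKey(system, decision_type, risk_tier)
        if key not in self._entries:
            # No implicit default: an undocumented decision is an error.
            raise UndefinedBoundaryError(f"No documented boundary for {key}")
        return self._entries[key]


# Sample entries are invented for illustration.
register = DecisionRightsRegister()
register.define("credit-underwriting", "limit increase", "low", Authority.AUTONOMOUS)
register.define("credit-underwriting", "loan denial", "high", Authority.COLLABORATIVE)

print(register.authority_for("credit-underwriting", "loan denial", "high").value)
```

Asking the register about a decision that was never documented, such as an account closure, raises `UndefinedBoundaryError`. That is the behavior a gap assessment is meant to surface: every such error marks a decision the system may be making under authority no one granted.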

The third is an Independent AI Risk Pipeline: a structured mechanism through which AI risk signals reach the board without requiring management to surface, filter, or frame them first. The 12- to 18-month visibility lag documented in the forensic record is not produced by technology limitations. It is produced by information pipelines that route through management’s organizational interests before reaching the governance layer. A risk pipeline that depends on management to activate it is not independent oversight. It is notification infrastructure for risks management has already decided to escalate.
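The structural difference can be shown in a minimal sketch. Everything here is hypothetical: the severity scale of 1 to 5, the threshold value, the class names, and the sample signals are assumptions chosen for illustration. What the sketch demonstrates is the routing rule: delivery to the board depends only on a threshold the board itself sets, never on a management decision to escalate.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class RiskSignal:
    system: str
    description: str
    severity: int  # hypothetical scale: 1 (low) to 5 (critical)


@dataclass
class RiskPipeline:
    """Hypothetical pipeline that fans out signals to two independent inboxes."""
    board_threshold: int  # assumption: set in the committee charter
    management_inbox: List[RiskSignal] = field(default_factory=list)
    board_inbox: List[RiskSignal] = field(default_factory=list)

    def publish(self, signal: RiskSignal) -> None:
        # Management receives every signal.
        self.management_inbox.append(signal)
        # Board delivery is decided by the threshold alone, so no
        # management action is needed (or able) to trigger or block it.
        if signal.severity >= self.board_threshold:
            self.board_inbox.append(signal)


# Sample signals are invented for illustration.
pipeline = RiskPipeline(board_threshold=4)
pipeline.publish(RiskSignal("credit-underwriting", "approval rate drift", 2))
pipeline.publish(RiskSignal("credit-underwriting", "disparate denial pattern", 5))

print(len(pipeline.management_inbox), len(pipeline.board_inbox))
```

In this run management sees both signals and the board sees only the critical one, without anyone in management having chosen to forward it.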

Before your group concludes, assign each of these three decisions to a named owner with a completion date: Committee Constitutionalization, Decision-Rights Boundary Definition, Independent AI Risk Pipeline. Ask each owner to return with a documented gap assessment: what currently exists, what the evidentiary and competitive standard requires, and what specific institutional action closes the gap. That assignment is not a governance exercise. It is a fiduciary one.

1 NACD Board Practices Report, 2025.

2 Deloitte AI Risk Governance Survey, 2025.

3 Gartner AI Governance Study, 360-Organization Benchmark, 2024.

4 JPMorgan Chase Annual Report, 2024.

5 In re Caremark Int’l Inc. Derivative Litigation, Del. Ch. 1996; In re SolarWinds Corp. Securities Litigation, S.D.N.Y., 2023.